How to Optimize my Robot Camera?

Posted on Jun 27, 2016 7:00 AM. 5 min read time

Whether you have already automated a process and want to improve it, or you’re looking to automate a new one, you might have wondered: “Hmm, should I add a vision sensor or not?” Here are a few things a vision system can help you accomplish. Hopefully you can then decide what is best for you!

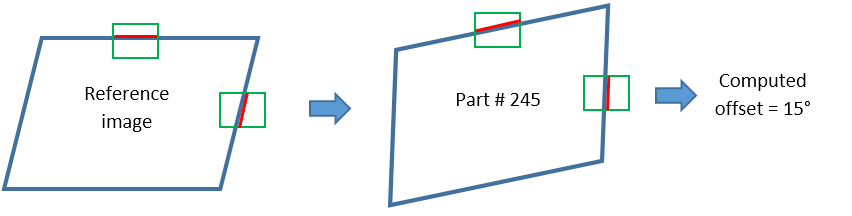

It Lets You See if You’re Offset

If you have programmed a path for the robot and run it in normal production, you might find that it is slightly offset on some parts. Many variables can affect where the part actually is when the robot comes to grab it: the part may have shifted a bit from its normal position, or its orientation may have changed in the bin it was resting in. I’m sure you can think of many other things that could make the actual position of a part differ from where it was supposed to be. Vision helps you compensate for those variations.

To compensate for offsets, you need to detect reference features, for example part edges, and compute an offset from how far they have moved compared to the reference image. Depending on your application, this offset can either be sent back to the robot to adjust its path, or be used to adjust the image processing itself (for example, to place the inspection regions where the part actually is). In other words, this feature of machine vision increases reliability.
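As a rough illustration of the offset computation, here is a minimal Python sketch. It assumes the reference features (say, corners found on the part edges) have already been detected and matched in both the reference image and the new image, in the same order; the function name `estimate_offset` is hypothetical. It fits the 2D rotation and translation that best map the reference points onto the observed ones in the least-squares sense.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]

def estimate_offset(reference: List[Point], observed: List[Point]) -> Tuple[float, float, float]:
    """Least-squares 2D rigid offset (dx, dy, dtheta in radians) that maps the
    reference feature points onto the same features found in the new image.
    Points must be given in matching order."""
    if len(reference) != len(observed) or len(reference) < 2:
        raise ValueError("need at least two matched point pairs")
    n = len(reference)
    # Centroids of both point sets
    rcx = sum(p[0] for p in reference) / n
    rcy = sum(p[1] for p in reference) / n
    ocx = sum(p[0] for p in observed) / n
    ocy = sum(p[1] for p in observed) / n
    # Accumulate dot and cross products of the centered point pairs
    s_dot = s_cross = 0.0
    for (rx, ry), (ox, oy) in zip(reference, observed):
        ax, ay = rx - rcx, ry - rcy
        bx, by = ox - ocx, oy - ocy
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    # Rotation angle that best aligns the centered point sets
    theta = math.atan2(s_cross, s_dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that moves the rotated reference centroid onto the observed one
    dx = ocx - (c * rcx - s * rcy)
    dy = ocy - (s * rcx + c * rcy)
    return dx, dy, theta
```

The result is in image units (pixels and radians). Before being sent to the robot, it would have to be converted into the robot’s coordinate frame with a camera-to-robot calibration, which is assumed here and not shown.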

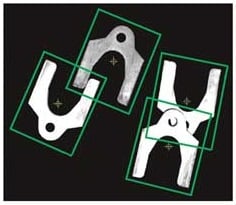

It Lets You Adapt to the Part

We have talked about the offsets a vision system lets you manage, but there are many other variations you might need to compensate for. The shape of the part will probably vary slightly from one part to the next: a corner might be a little more twisted than usual, the part might be a little wider, its outline might be slightly different, and so on.

For processes where the robot is in contact with the part, you could use a force-torque sensor to adapt to the specific geometry of each part. For non-contact processes, a vision system lets you do something similar: it computes offsets and sends them back to the robot, which in turn adjusts its path accordingly.
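A minimal sketch of the non-contact case, assuming the vision system has already measured, at each programmed waypoint, how far the real part edge deviates from the nominal one along the path normal. The names `adapt_path` and `max_shift`, and the 2-unit default, are illustrative assumptions rather than any specific robot API.

```python
from typing import List, Tuple

Point = Tuple[float, float]

def adapt_path(waypoints: List[Point], normals: List[Point],
               deviations: List[float], max_shift: float = 2.0) -> List[Point]:
    """Shift each programmed waypoint along its unit normal by the edge deviation
    measured by the camera at that point (same units as the path, e.g. mm).
    Corrections larger than max_shift are clamped rather than trusted blindly."""
    if not (len(waypoints) == len(normals) == len(deviations)):
        raise ValueError("waypoints, normals and deviations must have the same length")
    corrected = []
    for (x, y), (nx, ny), d in zip(waypoints, normals, deviations):
        d = max(-max_shift, min(max_shift, d))  # clamp suspicious measurements
        corrected.append((x + d * nx, y + d * ny))
    return corrected
```

Clamping is only one conservative choice: a very large deviation is more likely a bad measurement than a real part, so another option would be to stop and flag the part instead.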

It Lets You See if Something Is Unusual

This might not be the first thing you think about when you decide to include vision in an automation system; it is usually a bonus that comes from simply having a visual sensor in the system. Here are some examples I’ve seen in the past of problems that might have gone unnoticed by the robot if it weren’t for the vision system:

- The part was not picked up by the robot, so the robot arrived in front of the camera empty-handed. It would have gone through the whole inspection routine if it weren’t for a quick overall check made on picture #1.

- A section of the part hadn’t been cut off. Wow, good thing the camera caught this: the part was longer than usual and could have damaged some of the equipment.

- The first picture was completely dark. Light failure? Light synchronization problem? One thing is sure: the part could not be properly inspected using this image. Good thing this was caught early on!

- There’s a very obvious hole in the part. This kind of defect was not listed in the spec as a ‘common defect’, so the system did not classify it, but the image processing flagged it as ‘something really weird’. This is a very good example of an anomaly that can be caught early in the production line. Had this defective part gone on to the next processing steps and been rejected only at final inspection, money would have been spent processing a part that was doomed from the start.
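Several of these catches come down to cheap whole-image checks run before the detailed inspection. The sketch below assumes a backlit setup (bright background, part seen as a dark silhouette) and a grayscale image stored as a list of pixel rows; the function name `sanity_check` and every threshold are hypothetical and would need tuning on real images.

```python
from typing import List, Optional

Image = List[List[int]]  # grayscale rows, 0 = black, 255 = white

def sanity_check(img: Image,
                 min_mean: float = 40.0,
                 part_level: int = 100,
                 min_part_fraction: float = 0.02,
                 max_part_length: Optional[int] = None) -> List[str]:
    """Quick whole-image checks run before the detailed inspection.
    Assumes a backlit setup: bright background, part seen as a dark silhouette."""
    pixels = [p for row in img for p in row]
    if sum(pixels) / len(pixels) < min_mean:
        # Whole frame is dark: nothing else in this image can be trusted
        return ["image too dark (light failure or synchronization problem?)"]
    problems = []
    dark = sum(1 for p in pixels if p < part_level)
    if dark / len(pixels) < min_part_fraction:
        problems.append("no part visible (empty gripper?)")
    elif max_part_length is not None:
        # Horizontal extent of the silhouette, in pixels
        cols = [x for x in range(len(img[0])) if any(row[x] < part_level for row in img)]
        length = cols[-1] - cols[0] + 1
        if length > max_part_length:
            problems.append(f"part too long: {length} px > {max_part_length} px")
    return problems
```

An empty list means the image passed the quick checks and the detailed inspection can proceed; any message means the cycle should stop before wasting time on a bad image or a missing part.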

It Lets You Keep the Process Very Similar to What It Currently Is

One challenge of automation is rethinking your process in terms of automated tasks. You have been running the same process for years, with an expert operator using two hands, two eyes and (especially) a brain, with all the expertise embedded in it, to accomplish something specific. Now that you want to automate (without spending too much money on it), you are left with a robot that has one or two hands, no eyes, and a brain that has yet to be programmed. Surely you will need to rethink your whole process to make it work properly? Well, that’s true! One thing that can help, though, is adding sensors to ease the work. For a pick-and-place operation, for example, a vision sensor on the robot gives you some flexibility: you will be able to keep working with your current disorderly bins, and the process will require less path programming. Of course, machine vision is not magic: if you randomly pile up four parts so that they overlap, you will find that it doesn’t work so well.
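To illustrate the advice about overlapping parts, here is a hypothetical sketch of how a pick-and-place program might choose only parts that are clearly separated from their neighbours. It assumes the vision system returns one axis-aligned bounding box per detected part, in pixels; the names `overlaps` and `pickable` are made up for this example.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in image pixels

def overlaps(a: Box, b: Box) -> bool:
    """True if two axis-aligned boxes share some area (touching edges do not count)."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]

def pickable(parts: List[Box]) -> List[int]:
    """Indices of detected parts that overlap no other detection, i.e. parts
    the robot can be sent to grab without disturbing a neighbour."""
    return [i for i, a in enumerate(parts)
            if not any(overlaps(a, b) for j, b in enumerate(parts) if j != i)]
```

Real parts in a bin are rarely aligned with the image axes, so a production system would more likely compare rotated boxes or contours; the idea of skipping parts that overlap a neighbour stays the same.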

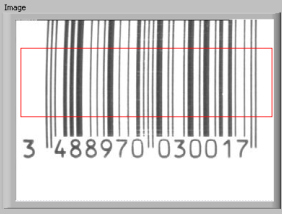

It Can Do Many, Many Things!

So far we have seen that a vision system can help you flag up something unusual, adapt to the part’s specific shape and check for offsets. But really, it can do a lot more than that! Here are a few things vision can do:

Measure the part: Its width, height, thickness, diameter, etc.

Count features, or count the number of parts: Count holes in a plate or count pills/muffins/screws.

Locate the part: Similar to checking for offsets, but in this case the robot path isn’t preprogrammed and then offset. Instead, it is built along the way as the camera detects the various parts.

Inspect the part: Check for visual defects (cracks, pits, bumps, rust, labels), correct color, intact packaging, correct alignment, etc.

Read or decode: Bar codes (1D or 2D), characters, etc.
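Counting and measuring often start from the same step: threshold the image into a binary mask, then group touching foreground pixels into separate regions (connected-component labelling). The following is a minimal pure-Python sketch of that idea using a breadth-first flood fill with 4-connectivity; `count_blobs` is a hypothetical name, and a real system would more likely rely on a vision library.

```python
from collections import deque
from typing import List, Tuple

def count_blobs(mask: List[List[int]]) -> List[Tuple[int, int, int]]:
    """Label 4-connected regions of 1s in a binary mask (e.g. thresholded pills
    or holes). Returns (area, width, height) in pixels for each region found."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    blobs = []
    for y0 in range(h):
        for x0 in range(w):
            if mask[y0][x0] != 1 or seen[y0][x0]:
                continue
            # Breadth-first flood fill starting from an unvisited foreground pixel
            queue = deque([(y0, x0)])
            seen[y0][x0] = True
            area = 0
            x_min = x_max = x0
            y_min = y_max = y0
            while queue:
                y, x = queue.popleft()
                area += 1
                x_min, x_max = min(x_min, x), max(x_max, x)
                y_min, y_max = min(y_min, y), max(y_max, y)
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] == 1 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            blobs.append((area, x_max - x_min + 1, y_max - y_min + 1))
    return blobs
```

The number of regions gives the count (pills, holes, screws), and each region’s width and height give a rough measurement in pixels; converting those to millimetres would require a calibrated scale factor, which is assumed here rather than shown.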

Conclusion

Vision systems can be the main focus of your automation (e.g. for inspection purposes), but they can also act as a great “right-hand man” by providing information that helps you accomplish the task at hand. Of course, integrating a vision system comes with its own challenges. It can be very sensitive to its surroundings and hard to implement in some cases, while in others it can be as easy as 1-2-3. Read about Robotiq's new vision system that we just launched last week.
