How Will Vision Technologies Evolve for Collaborative Robots in 2017 and Beyond?

Posted on May 30, 2017 7:00 AM. 2 min read time

Industrial robots have, for the longest time, been blind in a literal sense. They didn’t really need vision to perform their tasks, so they simply didn’t have it. In our modern era of collaborative robotics, however, vision systems are becoming a necessity as we deploy robots in more complex jobs and tasks. While we have some solutions in place, the future’s looking bright for vision technology in robots.

The Future of Vision Technology in Collaborative Robots

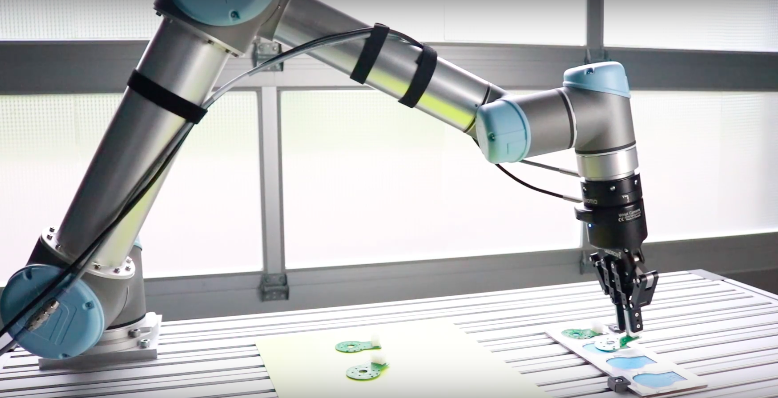

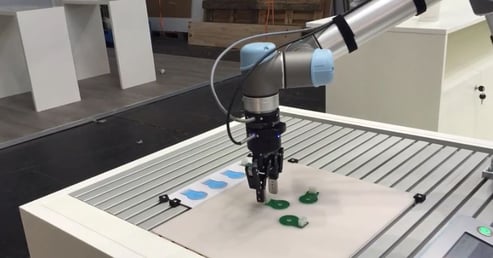

For some time now, robots have had 2D vision systems to detect movement, locate parts in a certain area, and perform other tasks. While these 2D options are impressive, they are limited in scope: a flat image carries no direct depth information.

The next step is to move into the 3D world. An early use of this technology is the infrared sensors that some robot models have been equipped with. A combination of both 2D and 3D vision systems will allow robots to handle more complex tasks.

Consider these benefits of a robot with 3D vision:

● The ability to record and measure depth

● Precise handling of components at high speeds

● Easier processing, troubleshooting, and maintenance for engineers

● Improved management of small components

● Far lower odds of collisions

Currently, early 3D systems use multiple 2D perspectives to build the image. Passive imaging, for example, uses two cameras mounted on the robot itself. Motion stereo uses one camera, but builds its image based on images from two or more locations.
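
To make the two-camera idea concrete, here is a minimal sketch of how depth can be recovered from a pair of 2D views. It assumes an idealized, rectified stereo pair and uses the standard pinhole relation Z = f × B / d (focal length times baseline, divided by disparity). The function name and all of the numbers are hypothetical and chosen only for illustration; they are not taken from any particular robot or vision product.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Estimate depth (in meters) of a point seen by two rectified cameras.

    focal_length_px: camera focal length, in pixels (assumed equal for both cameras)
    baseline_m:      distance between the two camera centers, in meters
    disparity_px:    horizontal shift of the same point between the two images
    """
    if disparity_px <= 0:
        # Zero disparity would mean the point is infinitely far away.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical example values, for illustration only.
focal_length_px = 800.0   # assumed focal length
baseline_m = 0.10         # assumed 10 cm between the cameras
x_left_px = 420.0         # column of the part in the left image
x_right_px = 400.0        # column of the same part in the right image

disparity_px = x_left_px - x_right_px
depth_m = depth_from_disparity(focal_length_px, baseline_m, disparity_px)
print(f"Estimated depth: {depth_m:.2f} m")
```

The same relation applies to motion stereo, where the baseline is the distance a single camera travels between shots. Because depth depends directly on the focal length and baseline, any shift in the camera mounting changes the result, which is why calibration matters so much for these systems.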

The biggest issue that manufacturers faced until recently was a lack of calibration methods. When they shipped out robots, everything was in a pre-calibrated state. One wrong move would send the vision system out of alignment.

Throughout 2017 and beyond, new 3D vision systems will incorporate the ability to self-correct and re-calibrate. Furthermore, new devices will have advanced triangulation techniques that calibrate without technical assistance.

The future is certainly focused on vision systems. According to Avinash Nehemiah, the product marketing manager for computer vision at MathWorks: “It can be attributed to a reduction in the cost of vision sensors, the maturation of vision algorithms for robotic applications, and the availability of processors with vision-specific hardware accelerators.”

Over the past several years, collaborative robots have begun to use vision systems as the primary method of environmental perception, or as part of a larger sensor setup. Working together with the Robotics and Mechatronics Center at the German Aerospace Center, MathWorks was able to create Agile Justin.

Agile Justin is a two-armed, mobile humanoid robot who can perform assembly tasks. He has 53 degrees of freedom and understands surrounding areas with stereo 2D cameras and RGB-D sensors in the head, along with torque sensors in the joints and tactile sensors in the fingers.

The 2D cameras on the robot are mounted side-by-side to allow Justin to see in 3D. While still using a 2D solution, Agile Justin represents the future and the applications of 3D vision systems. That future is becoming more and more of a reality as algorithms that can process 3D data emerge.

Final Thoughts

Collaborative robots have basic vision systems, but in the coming years, they will evolve to have 3D vision that will allow them to perform tasks better and learn new types of jobs we haven’t considered until this point.

What types of vision technology are you keeping your eyes on? Let us know in the comments!