How tactile sensing improves model performance

Vision-language-action models are the current state of the art in robotic manipulation. They still cannot pick up a potato chip...

From Physical AI to operational AI

Artificial intelligence has brought enormous excitement to robotics.

Robots can now walk, navigate complex environments, and...

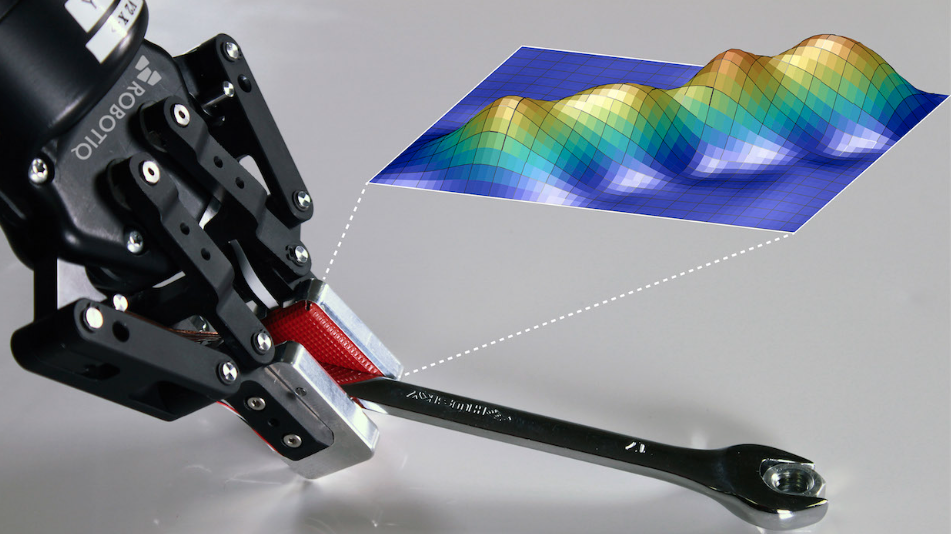

Robots can see. But they still can't feel.

Artificial intelligence has dramatically improved how robots perceive the world.

Computer vision allows robots to detect...

Why Physical AI needs better hardware, not just better models

Artificial intelligence is moving fast. Large language models can write emails, summarize reports, and generate software code...

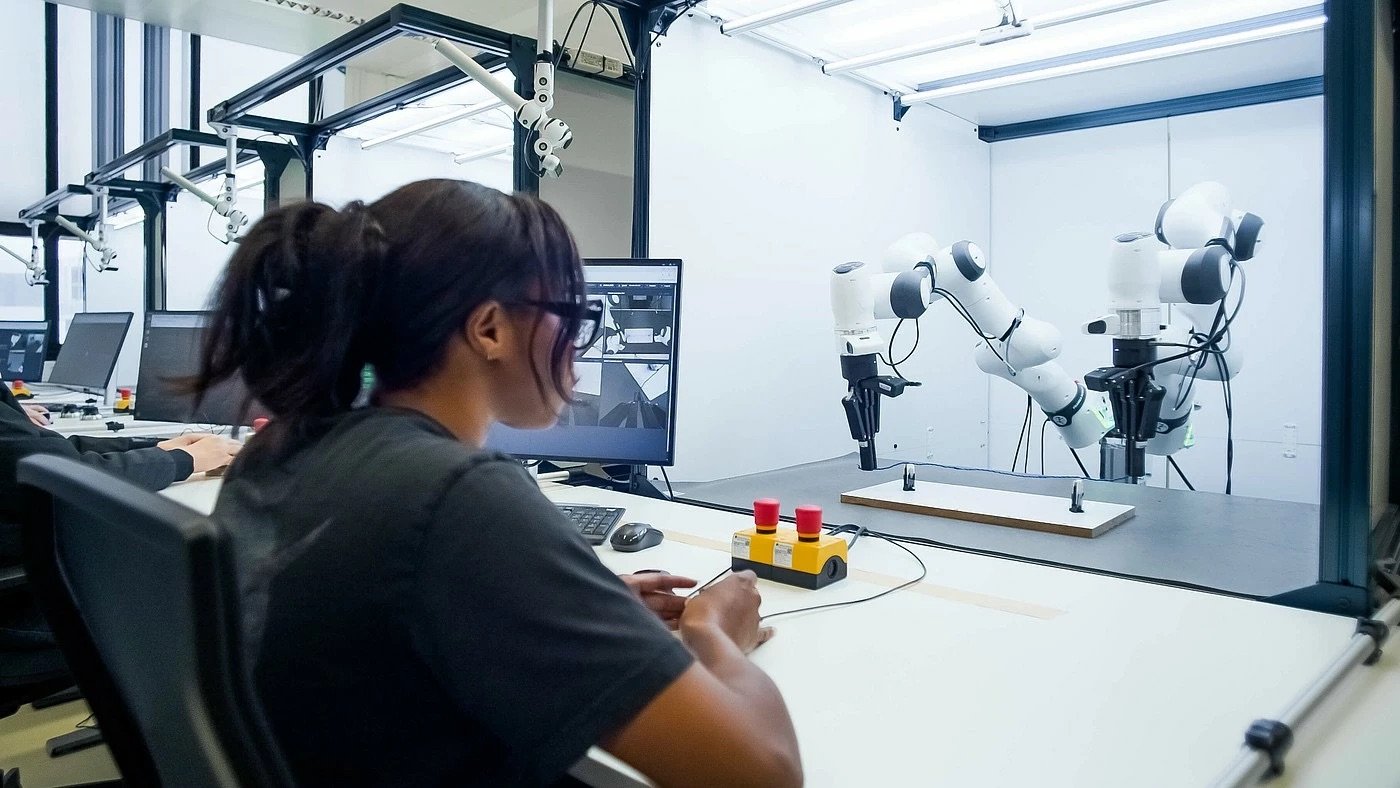

Why the gripper is the true interface between AI and the physical world

Artificial intelligence is transforming robotics. Vision systems can identify objects, machine learning models can plan...

Physical AI hardware: The missing layer between AI models and real-world manipulation

Artificial intelligence can generate actions.

Physical AI hardware determines whether those actions succeed in the real world.

...

Robots that feel: why touch is the next frontier in Physical AI

Physical AI has moved past proof-of-concept. Large models, better simulation, and faster hardware have pushed embodied...

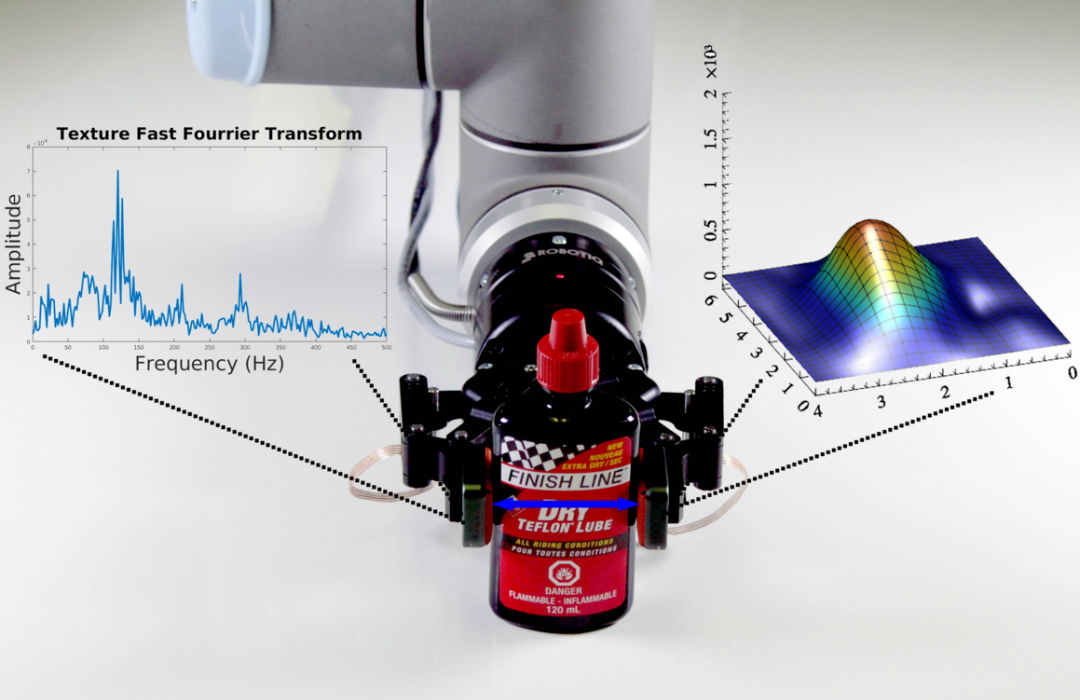

Robotiq brings the sense of touch to Physical AI

Physical AI has reached a critical point. Robots can see, plan, and decide better than ever—but manipulation in the real world...

When digital twins meet lean palletizing on the factory floor

CES is often associated with consumer technology and futuristic concepts. At CES 2026, the focus also included something...

