Why the gripper is the true interface between AI and the physical world

Posted on Mar 12, 2026 in Physical AI


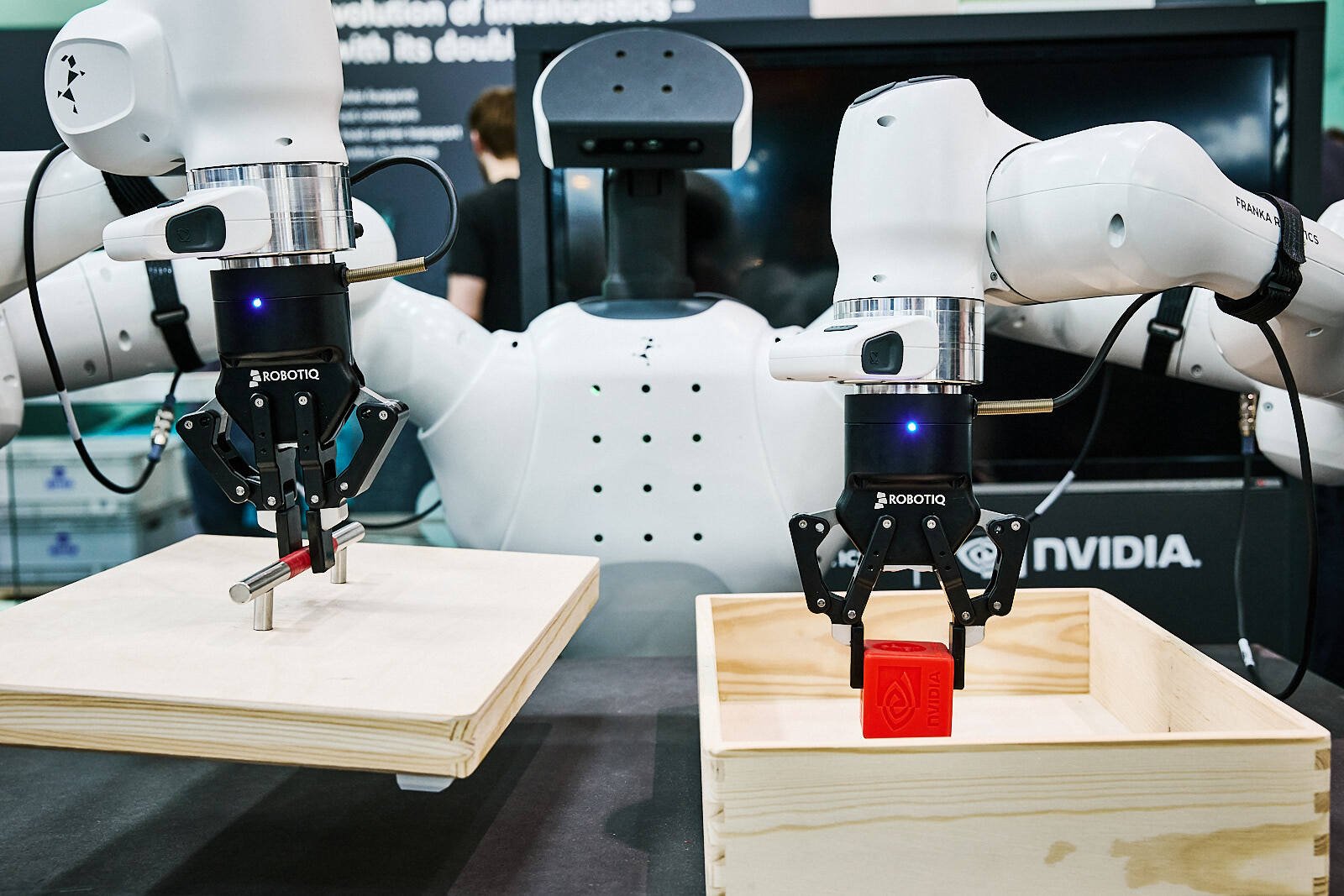

Artificial intelligence is transforming robotics. Vision systems can identify objects, machine learning models can plan motions, and digital twins can simulate entire production environments.

But for all the progress in AI, there is a moment when intelligence must leave the digital world and interact with reality.

That moment happens at the gripper.

In robotics, the gripper is often seen as a simple accessory attached to the robot arm. In reality, it plays a far more critical role. The gripper is the physical interface where AI decisions meet real-world physics.

Without a capable gripper, even the most advanced AI cannot successfully interact with the physical world.

From intelligence to action

Modern AI systems are increasingly capable of translating visual input directly into robotic actions.

Instead of relying on multiple independent systems—one for vision, another for grasp planning, and another for motion—many new models learn to map perception directly to action. A camera observes the scene, and the AI determines how the robot should move to interact with an object.

This shift is making robotic systems more adaptable and easier to deploy in environments where objects and conditions constantly change.

But even as intelligence becomes more integrated, the moment of action still happens in the physical world.

No matter how advanced the AI model becomes, success still depends on whether the robot can physically grasp the object. That responsibility falls to the gripper.

The gripper is where the AI’s decision becomes a real interaction with matter.

If the grip fails—because the object slips, deforms, or behaves unexpectedly—the system must recover. The robot may need to gather more information, replan its motion, and attempt the task again.

Each failure adds complexity, time, and uncertainty to the process. Even when nothing is damaged, the cost of recovery can quickly accumulate.
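To make the accumulation concrete, here is a rough back-of-the-envelope sketch. It assumes each grasp attempt succeeds independently with the same probability, so the expected number of attempts is 1 / p. The function name, the parameters, and every number in the usage note are hypothetical and chosen only for illustration.

```python
def expected_cycle_time(p_success, attempt_s, recovery_s):
    """Expected seconds per successful pick.

    Assumes independent attempts with a fixed success probability,
    so the number of attempts follows a geometric distribution.
    """
    if not 0.0 < p_success <= 1.0:
        raise ValueError("p_success must be in (0, 1]")
    expected_attempts = 1.0 / p_success
    # Every attempt except the final, successful one triggers a recovery.
    expected_failures = expected_attempts - 1.0
    return expected_attempts * attempt_s + expected_failures * recovery_s
```

Under these assumptions, with a 2-second attempt and a 5-second recovery, a gripper that always succeeds averages 2 seconds per pick, while one that succeeds half the time averages 9 seconds.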

In many cases, the gripper becomes the true bottleneck in robotic manipulation. AI may determine what action to take, but the reliability and capabilities of the gripper determine whether that action succeeds in the physical world.

The complexity of the real world

In simulation, grasping an object can look straightforward. Objects have defined shapes, friction behaves predictably, and conditions remain constant.

On the factory floor, reality is different.

Products vary slightly in size or shape. Packaging materials deform. Objects shift during transport. Surfaces may be slippery, porous, or fragile.

This variability makes grasping one of the hardest problems in robotics.

Even if an AI system perfectly identifies an object, the gripper must still handle:

- Differences in object geometry

- Variations in weight distribution

- Changing surface conditions

- Dynamic environments such as moving conveyors

A gripper must therefore be adaptive, forgiving, and robust.

Without these characteristics, AI systems struggle to translate intelligence into reliable action.

Why the gripper matters more as AI improves

As AI systems become more capable, the expectations placed on robotic manipulation increase.

AI can now detect a wide variety of objects and predict grasp points in real time. However, if the gripper cannot handle that variability, the potential of AI remains limited.

In other words, better AI requires better physical interfaces.

The gripper must support the flexibility that AI enables.

For example, modern robotic systems increasingly need to handle:

- Mixed-product palletizing

- Random bin picking

- Variable packaging formats

- Rapid product changeovers

In these scenarios, the gripper must handle many shapes and materials without requiring constant mechanical adjustments.

This is why gripper design is becoming a strategic component of intelligent automation.

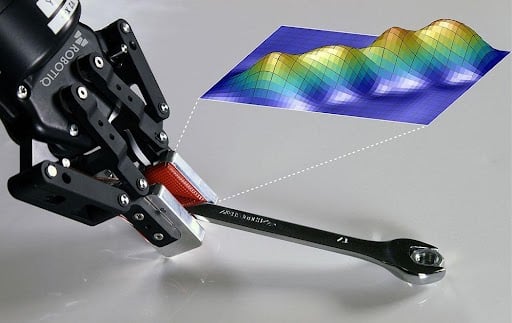

Sensors, feedback, and physical intelligence

The gripper is also where robots can gather valuable physical information.

While cameras and vision systems observe the environment, grippers can feel it.

Through sensors and feedback mechanisms, grippers can detect:

- Contact with objects

- Grip force

- Slippage

- Surface compliance

This information allows robotic systems to close the loop between perception and action.

Instead of blindly executing commands, robots can adjust their behavior in real time—tightening a grip, repositioning an object, or aborting a failed grasp.

In this way, the gripper becomes a source of physical intelligence, feeding data back into AI systems and improving performance over time.
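As a minimal sketch of what closing that loop could look like, the snippet below maps gripper sensor readings to the adjustments mentioned above. Everything here is an assumption made for illustration: the `GripReading` fields, the `Action` names, and the 20 N force limit do not come from any specific gripper or vendor API, and a real controller would run against live hardware rather than a list of samples.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    HOLD = "hold"
    TIGHTEN = "tighten"
    ABORT = "abort"


@dataclass
class GripReading:
    """One hypothetical sensor sample from the gripper."""
    in_contact: bool
    force_n: float        # measured grip force in newtons
    slip_detected: bool


def decide(reading, max_force_n=20.0):
    """Choose the next action from a single reading (illustrative rules only)."""
    if not reading.in_contact:
        return Action.ABORT       # nothing in the fingers: stop and replan
    if reading.slip_detected:
        if reading.force_n >= max_force_n:
            return Action.ABORT   # already at the force limit, do not squeeze harder
        return Action.TIGHTEN
    return Action.HOLD


def run_grasp(readings, max_force_n=20.0):
    """Process readings in order and return the actions taken, stopping on abort."""
    actions = []
    for reading in readings:
        action = decide(reading, max_force_n)
        actions.append(action)
        if action is Action.ABORT:
            break
    return actions
```

The rules are deliberately simple. The point is only that contact, force, and slip signals give the controller something to react to between the planned grasp and the finished task.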

Designing the bridge between digital and physical

To unlock the full potential of AI-driven robotics, manufacturers must think of the gripper not as a peripheral component, but as a core interface layer.

A well-designed gripper should:

- Handle a wide range of objects

- Adapt to variability in materials and shapes

- Provide feedback to the robot system

- Integrate seamlessly with perception and control systems

When these capabilities come together, the gripper becomes the bridge between digital decision-making and reliable physical execution.
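One way to picture the gripper as an interface layer is as a small software contract that the rest of the control stack programs against. The sketch below is purely illustrative: the `Gripper` protocol, its method names, and the `SimulatedGripper` stand-in are hypothetical and do not describe any particular product.

```python
from typing import Protocol


class Gripper(Protocol):
    """Hypothetical minimal contract a control stack could expect from a gripper."""

    def grasp(self, width_mm: float, force_n: float) -> bool: ...
    def release(self) -> None: ...
    def grip_force_n(self) -> float: ...


class SimulatedGripper:
    """Stand-in for testing; it holds anything that fits within its stroke."""

    def __init__(self, max_width_mm: float = 100.0) -> None:
        self.max_width_mm = max_width_mm
        self._force_n = 0.0

    def grasp(self, width_mm: float, force_n: float) -> bool:
        if width_mm > self.max_width_mm:
            return False  # object is wider than the gripper can open
        self._force_n = force_n
        return True

    def release(self) -> None:
        self._force_n = 0.0

    def grip_force_n(self) -> float:
        return self._force_n


def pick(gripper: Gripper, width_mm: float, force_n: float) -> bool:
    """Attempt a grasp and confirm it through force feedback."""
    if not gripper.grasp(width_mm, force_n):
        return False
    return gripper.grip_force_n() > 0.0
```

Because `pick` depends only on the protocol, the same calling code could in principle run against a simulated gripper during development and a physical one on the line.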

The overlooked key to intelligent automation

Much of the discussion around AI in robotics focuses on software, algorithms, and computing power.

But real-world automation depends on something simpler and more fundamental: the ability to grasp objects reliably.

The gripper is where intelligence meets physics. It is the point at which data turns into action.

As robotics continues to evolve toward more adaptive, AI-driven systems, the importance of this interface will only grow.

Because no matter how advanced the AI becomes, the robot still needs a way to touch the world.

