Vision-only manipulation is hitting a wall

Posted on Apr 30, 2026 in Physical AI


In 2016, I said something that went against where robotics was heading at the time: vision alone doesn’t work for grasping.

Not “it needs improvement.” Not “the tech isn’t there yet.” It doesn’t fit the problem.

Grasping is physical. Contact, force, friction. Vision can guide the approach. It can’t feel what happens next.

Back then, we saw it in the lab. Tactile vibration data predicted grasp failure with 83% accuracy and detected slip with 92% accuracy. Early results, but clear enough. The signals that matter don’t show up in images.

Ten years later, the rest of the field is running into the same limit.

Vision gets you close

Vision still matters. It handles detection, positioning and planning. It gets the robot to the right place, lined up the right way.

It does that well, but manipulation doesn’t stop when the gripper reaches the object.

That’s where things break.

What happens at contact isn’t visible

Before contact, the robot is working off images.

After contact, it’s dealing with forces.

A bad grasp doesn’t start as a visual change. It shows up as a shift in force. Slip starts in the fingertips before anything moves enough to see. Too much pressure shows up in the wrist before the object deforms.

By the time a camera picks up a problem, it’s already happening.

Vision sees outcomes. Contact sensing measures interaction as it happens.

And the useful data lives right there, at the moment of contact.
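To make that concrete, here is a minimal sketch of how a force signal can flag an impending slip before anything moves. It assumes a simple Coulomb friction model, which is my illustration and not a description of any particular sensor or product: the grip holds as long as the shear force stays below the friction coefficient times the normal force. The names `ContactSample`, `slip_margin`, and `first_warning`, and the values for `mu` and `threshold`, are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContactSample:
    t: float           # timestamp, seconds
    normal: float      # normal force at the fingertip, newtons
    tangential: float  # magnitude of the shear force, newtons


def slip_margin(sample, mu):
    """Unused share of the friction budget: 1.0 means no shear, 0.0 means at the slip limit."""
    if sample.normal <= 0.0:
        return 0.0  # no contact pressure, so nothing is holding the object
    return max(0.0, 1.0 - sample.tangential / (mu * sample.normal))


def first_warning(samples, mu=0.5, threshold=0.2):
    """Return the timestamp of the first sample whose margin drops below threshold, else None."""
    for s in samples:
        if slip_margin(s, mu) < threshold:
            return s.t
    return None


# Hypothetical readings: constant 10 N squeeze while the shear load ramps up.
samples = [
    ContactSample(0.00, 10.0, 1.0),
    ContactSample(0.01, 10.0, 2.0),
    ContactSample(0.02, 10.0, 3.0),
    ContactSample(0.03, 10.0, 4.5),
]
warning_time = first_warning(samples)
```

In this made-up trace the object has not moved at all, yet the margin is already shrinking with every sample. A camera would have nothing to report until the margin hits zero.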

The evidence is already there

This isn’t a theory anymore.

Tactile-driven policies beat vision-only ones on tasks that involve force. Benchmarks like ManiSkill-ViTac show better performance when you combine vision with tactile input, especially in insertion and assembly. Models like π0, OpenVLA, and Octo depend on synchronized inputs from multiple sensors. Remove force or tactile data, and performance drops.

No one is replacing vision. They’re adding what’s missing.

The strongest systems today combine vision, proprioception, force, and touch into a single model.

That’s what moves performance.

Vision has already given most of what it can

Vision still carries a lot of the system. But it doesn’t solve the hard part.

Physical AI improves with more data, but not all data matters the same. Force and tactile signals have an outsized impact on how well a system handles real contact.

Most datasets still lean heavily on vision and joint data.

So you see the same pattern over and over. Robots reach the right position, then struggle with insertion, assembly, and anything that depends on compliance.

The missing information is physical.

Tactile data hasn’t scaled yet

Collecting good contact data hasn’t been easy. You need instrumented end effectors, reliable force and tactile sensors, tight synchronization, and consistent formats.
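The synchronization part is easy to underestimate. As a rough sketch, assuming camera frames and force samples each carry a timestamp on a shared clock, one common approach is to pair every frame with the nearest force sample and drop the pair when the gap is too large. The function name `align_nearest`, the millisecond units, and the tolerance value are assumptions for illustration only.

```python
from bisect import bisect_left


def align_nearest(frame_times, sensor_times, tolerance):
    """For each frame time, return the index of the nearest sensor sample,
    or None when the closest sample is further away than tolerance.
    Both lists must be sorted in ascending order and use the same time unit."""
    matches = []
    for ft in frame_times:
        i = bisect_left(sensor_times, ft)
        # The nearest sample is either just before or just after the insertion point.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(sensor_times)]
        best = min(candidates, key=lambda j: abs(sensor_times[j] - ft), default=None)
        if best is not None and abs(sensor_times[best] - ft) > tolerance:
            best = None
        matches.append(best)
    return matches


# Hypothetical timestamps in milliseconds: a ~30 Hz camera and a 100 Hz force sensor.
frame_ms = [0, 33, 67, 200]
force_ms = [1, 11, 21, 31, 41, 51, 61, 71]
pairs = align_nearest(frame_ms, force_ms, tolerance=5)
```

The last frame has no force sample within tolerance, so it comes back as `None` instead of being silently paired with stale data. That kind of gap is exactly what makes a contact dataset hard to trust if it goes unnoticed.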

That’s a hardware problem as much as a modelling one.

Until recently, the infrastructure wasn’t there.

Now it is.

The bottleneck is how fast teams can deploy it and start collecting data.

Closing the loop

What started as a claim in 2016 is now showing up everywhere.

Robots that only see will keep hitting the same limits. Robots that can feel will start to close the gap.

Vision stays. It’s not going anywhere.

But it won’t carry manipulation on its own. The shift comes from adding the signals that matter at the point of contact.

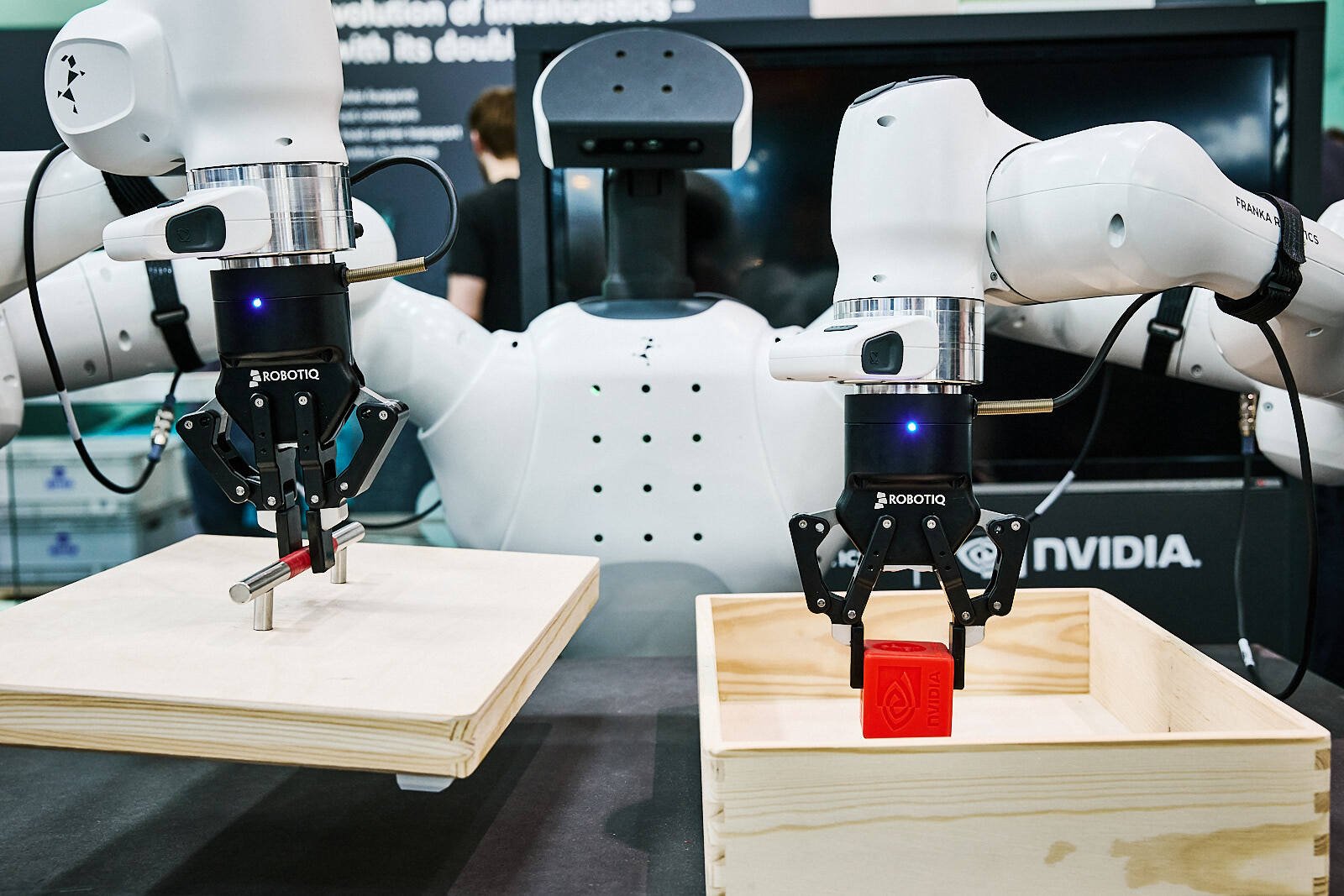

At Robotiq, our tactile sensors are built to capture those signals directly at the gripper, so robots can both see and feel what they’re doing.
