INTELLIGENT ROBOT BECOMES BANK LOBBY MANAGER - What's New in Robotics This Week - Sept 25

This week's most innovative, avant-garde or novel robotic news stories include:

Sassy Chinese bank robots; developing robotics standards; machine ethics; FAA drone exemptions... and more.

Intelligent robot ‘Jiaojiao’ becomes bank lobby manager (People's Daily)

On Monday of this week China's People's Daily carried the funniest little human-robot exchange you're likely to hear all year.

Jiaojiao: The world's first robot with tyrant detection capabilities?

In a brief story about Jiaojiao, a $15,700 USD, 90 cm robot receptionist that has started helping customers at Bank of Communications' Shanghai office, the following real-world exchange was reported:

Client: "Where should I go if I want deposit 100,000 yuan?" [Around $15,665 USD]

Jiaojiao: "100,000, that's a huge amount. I suggest you go to the counter, tyrant."

An opinionated little robot with a devilish sense of humor working in customer service? This can't end well.

As the paper reports: "With 'Jiaojiao', waiting in the bank is no longer boring."

You can say that again!

(A more detailed story about Jiaojiao, which was developed by the Nanjing University of Science and Electronic Information Technology Corporation and a consortium of Chinese tech companies, is available on Yibada.)

Call for Robot Standards (RoboHub)

Photo: Vecna website

Daniel Theobald, CTO of the robotic logistics and technology firm Vecna, called for greater collaboration on standards for the robotics industry in an interview published this week:

It’s very important for us, as an industry, to understand that sharing and collaborating on these types of standards benefits everybody. It reduces the friction to robotics adoption, meaning that it will be more cost effective for customers to buy robots, which means that there will be more robots and that benefits everybody.

Theobald is correct. Additionally, standards provide a degree of legal and regulatory security for roboticists and other innovators to work within, safe in the knowledge that their technology doesn't break any laws.

Insurers also feel more secure working in strictly regulated environments that leave little room for interpretation.

Meanwhile, greater standardization means less reinvention of the wheel every time a new robot is designed.

Theobald continues:

[...] collaborating on standards is important. The computer industry would not be where it is today if every single computer operated differently. If you had to buy software only from the company, that you bought the hardware from, the internet wouldn’t exist; the ability to download software from multiple vendors and app stores, that wouldn’t exist.

It’s too late to give machines ethics – they’re already beyond our control (The Guardian)

Artificial intelligence is already developing for its own benefit and not for ours, writes Sue Blackmore in an opinion piece for The Guardian:

The fact is that all intelligence emerges in highly interconnected information processing systems; and through the internet we are providing just such a system in which a new kind of intelligence can evolve.

This way of looking at AI rests on the principle of universal Darwinism – the idea that whenever information (a replicator) is copied, with variation and selection, a new evolutionary process begins. The first successful replicator on earth was genes.

The view that AI is already developing for its own benefit and not ours is one shared by Demis Hassabis, founder of AI firm DeepMind, which was acquired by Google in 2014.

Just as consciousness emerged from neuronal interconnections, a new intelligence is emerging from "the gazillions of interconnections we have provided through our computers, servers, phones, tablets and every other piece of machinery that copies, varies and selects an ever-increasing amount of information".

The main problem presented by this emerging AI is the problem all replicators present, as Blackmore explains:

Replicators are selfish by nature. They get copied whenever and however they can, regardless of the consequences for us, for other species or for our planet. You cannot give human values to a massive system of evolving information based on machinery that is being expanded and improved every day. They do not care because they cannot care.

In one sense, describing AI as 'selfish' is just as misplaced and anthropomorphic as giving your Roomba a name.

But there are important implications when we come to consider the extent to which we will be able to control AI and our ability to differentiate between human intelligence and its AI equivalent.

Can an AI ever experience human-like desire or intentionality? Or will AIs always merely follow instructions?

And what, if any, are the fundamental differences between a robot following instructions to meet a target and a human following his or her desires?

Finally, if, as some argue, human ethical decision-making is at least partly based on desire and emotion, then how can we implement ethics in a device that is incapable of either desire or emotion?

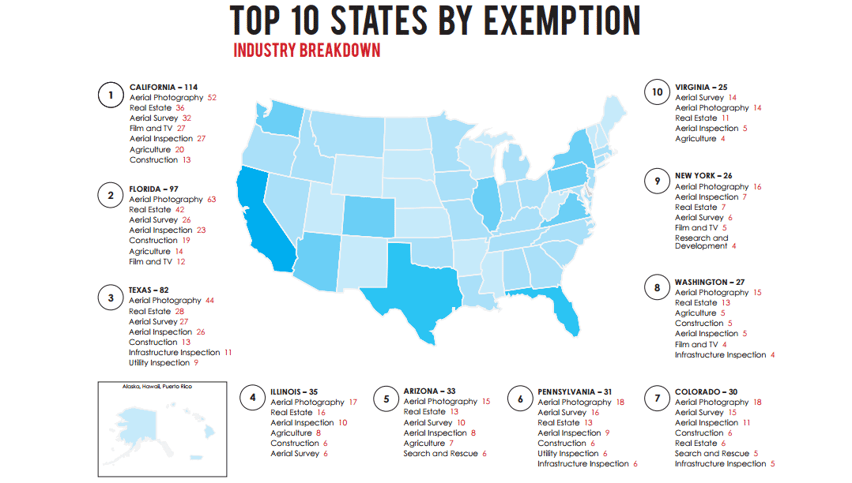

FAA Breaks Down Winners, Losers In Growing Drone Market (Fortune)

Image: AUVSI

An interesting piece from Fortune on the recent Association for Unmanned Vehicle Systems International (AUVSI) report on the FAA's "Section 333 exemptions", which allow successful applicants to operate drones commercially in U.S. national airspace:

The most interesting data in AUVSI’s analysis has nothing to do with the state-by-state breakdown and everything to do with the kind of companies applying for commercial drone permits nationwide. “At least 84% and as many as 94.5% of all approved companies are small businesses”, the report says.

And Also...

Driverless Pods Arrive In The Netherlands (The Telegraph); How Etsy can strengthen America’s small-scale manufacturing sector (Brookings); Will Sexbots Devalue Our Human Relationships? (Big Think); New advanced robot to join cleanup effort at Fukushima No. 1 nuclear plant (The Mainichi); Hackers Launch Balloon Probe Into the Stratosphere to Spy on Drones (Wired)
