What Is Dynamic Tactile Sensing?

When it comes to robots that are built to handle objects, tactile sensing is one of the keys to success. Obviously, it’s helpful for a robot to feel what it’s holding and understand its grasp position, so it can manipulate the object properly. But what you may not realize is that tactile information is not only about sensing static events, such as contact location or forces – it’s also about sensing dynamic events.

In this post, you’ll learn about the difference between dynamic and static sensing, and see why dynamic tactile sensing is so important.

Static Sensing

When we humans think of our sense of touch, we might imagine it as a simple pressure-sensing mechanism. Pressure information, which is obtained during static tactile sensing, includes things like the force your hand is exerting on an object while you’re holding it.

Why is this known as static sensing? Because when you have a solid grasp on something, the amount of force your hand exerts on it is relatively steady, and any changes in force happen slowly. Hence, static tactile sensing refers to slow changes in applied forces.

Wait a minute – if “static” means “unchanging,” shouldn’t static sensing be for forces that don’t change at all, rather than forces that change slowly? Well, although the strict definition of the word static is indeed “unchanging,” in tactile sensing applications we’re actually using “static” to refer to relatively low frequencies.

If you look at the frequency spectrum of a tactile signal, which shows how much of the signal's energy lies at each frequency (a spectrogram shows how that spectrum evolves over time), static sensing covers the events in the low-frequency range.
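To make the low-frequency versus high-frequency split concrete, here is a minimal Python sketch that separates a force signal into a slow part and a fast part with a moving average. The signal, the 1 kHz sample rate, the 200 Hz vibration, and the window length are all made-up values for illustration, and a real sensor pipeline would likely use a properly designed filter instead.

```python
import math

def split_static_dynamic(force, window):
    """Split a force signal into a slow (static) part and a fast (dynamic) part.

    The static part is a centered moving average spanning up to `window`
    samples (fewer near the ends of the signal); the dynamic part is
    whatever remains after subtracting it.
    """
    half = window // 2
    static = []
    for i in range(len(force)):
        lo = max(0, i - half)
        hi = min(len(force), i + half + 1)
        static.append(sum(force[lo:hi]) / (hi - lo))
    dynamic = [f - s for f, s in zip(force, static)]
    return static, dynamic

# Hypothetical signal: a steady 2 N grip plus a small 200 Hz vibration,
# sampled at 1 kHz for one second.
SAMPLE_RATE_HZ = 1000
force = [
    2.0 + 0.1 * math.sin(2 * math.pi * 200 * n / SAMPLE_RATE_HZ)
    for n in range(1000)
]

static, dynamic = split_static_dynamic(force, window=51)
```

Away from the edges, `static` stays close to the 2 N grip force, while `dynamic` carries the vibration. A longer window pushes the boundary between "static" and "dynamic" toward lower frequencies.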

Dynamic Sensing

Now you know what static sensing is. But the human sense of touch is not just about what happens when we’re firmly gripping an object – we also sense tactile information whenever our skin comes in brief contact with a surface, when we throw and catch an object, or when we’re holding something and it slips or falls out of our grasp.

These sorts of events are where dynamic sensing comes into play. It measures information that’s rapidly changing, which is basically anything that involves movement of the sensor, the object, or both.

In human skin, static and dynamic sensing are separate tasks, and they’re done by two different classes of mechanoreceptors. Static sensing is mainly done by slow-adapting mechanoreceptors called Merkel’s disks and Ruffini endings. They’re called “slow-adapting” because their response fades slowly: they keep firing for as long as a stimulus is held. Consequently, they’re good at sensing steady pressure, and less sensitive to quick changes in stimulation.

Dynamic sensing is done by fast-adapting mechanoreceptors known as Pacinian corpuscles and Meissner’s corpuscles. They respond when stimulation changes and quickly go quiet once it holds steady, so they’re much better at sensing vibrations.

Why Both Types of Sensing Are Necessary

If you only had static sensing capabilities, you might be able to hold onto an object well enough, but other manipulation tasks would be a different story. Picking an object up or putting it back down again, for example, would be frustratingly difficult without dynamic sensing.

So although static pressure sensing is a big part of the sense of touch, the ability to detect dynamic events is equally important.

Here are just a few of the situations where dynamic sensing can help with robotic grasping and manipulation skills:

Initial Contact

In “Dynamic Tactile Sensing,” researcher Mark Cutkosky describes how dynamic sensing can help robots determine the moment when they first come in contact with an object.

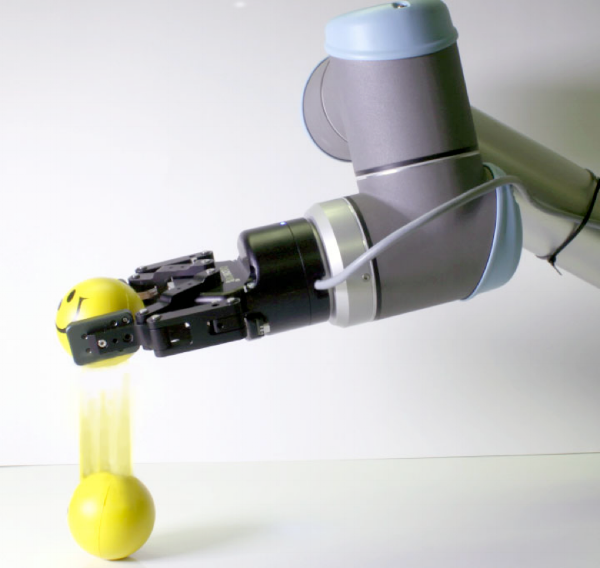

Consider the task of picking up a ball from a table: a ball is a tricky object because the lightest touch can cause it to roll away. With static sensing alone, the robot would most likely cause the ball to roll away before it could sense a high enough level of applied force.

Dynamic sensing is much more useful in this case because it looks at variation in force over time, so even if the robot only applies a light touch, it will be able to recognize this brief moment of initial contact very quickly.
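The ball example can be sketched in a few lines of Python. This is only an illustration: the force trace, the 1 kHz sample rate, and both thresholds (0.5 N for the static check, 5 N/s for the rate-of-change check) are hypothetical numbers, and a noisy real-world signal would need filtering before differentiating.

```python
def first_index_above(values, threshold):
    """Return the index of the first value above `threshold`, or None."""
    for i, v in enumerate(values):
        if v > threshold:
            return i
    return None

def force_rate(force, sample_rate_hz):
    """Finite-difference estimate of dF/dt in N/s (first sample set to 0)."""
    return [0.0] + [(b - a) * sample_rate_hz for a, b in zip(force, force[1:])]

SAMPLE_RATE_HZ = 1000

# Hypothetical trace: no contact for 100 ms, then a light touch that
# ramps up to 0.05 N over 5 ms and holds there.
force = [0.0] * 100 + [0.01 * k for k in range(1, 6)] + [0.05] * 100

# Static check: wait for the force itself to exceed 0.5 N.
static_hit = first_index_above(force, threshold=0.5)

# Dynamic check: look for a fast change in force (more than 5 N/s).
dynamic_hit = first_index_above(force_rate(force, SAMPLE_RATE_HZ), threshold=5.0)

print(static_hit)   # None: the light touch never reaches 0.5 N
print(dynamic_hit)  # 100: contact is flagged on the first sample of the ramp
```

The static check never fires because the touch stays far below its threshold, while the rate-of-change check catches the contact at the very first sample where the force starts to rise.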

Object Slippage

When an object slips out of a robot’s grasp, it’s a dynamic event because the variation in force happens quickly – quick enough to generate vibrations on the surface of the gripper.

The hard part is distinguishing between vibrations caused by object slippage and vibrations caused by other events, such as when a robot drags an object across a table (known as object-world slip).

That’s why Jean-Philippe Roberge et al. studied how robots can determine when an object is truly slipping from their grasp, as opposed to when it’s merely a case of object-world slip, using a dynamic sensor that was custom built at ÉTS’s Control and Robotics (CoRo) Lab.
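Detecting that vibrations are present at all is the easier half of the problem. As a rough Python sketch (not the method used by Roberge et al.), one could flag short windows of the dynamic signal whose RMS amplitude exceeds a threshold. The trace, the 250 Hz burst, the window size, and the threshold are all hypothetical, and this check cannot tell gripper slip from object-world slip.

```python
import math

def windowed_rms(signal, window):
    """RMS of each consecutive, non-overlapping window of `window` samples."""
    out = []
    for start in range(0, len(signal) - window + 1, window):
        chunk = signal[start:start + window]
        out.append(math.sqrt(sum(x * x for x in chunk) / window))
    return out

def vibration_windows(signal, window, threshold):
    """Indices of the windows whose RMS exceeds `threshold`."""
    return [i for i, r in enumerate(windowed_rms(signal, window)) if r > threshold]

# Hypothetical dynamic-channel trace sampled at 1 kHz:
# 200 ms of quiet, a 100 ms burst of 250 Hz vibration, then 200 ms of quiet.
SAMPLE_RATE_HZ = 1000
quiet = [0.0] * 200
burst = [0.2 * math.sin(2 * math.pi * 250 * n / SAMPLE_RATE_HZ) for n in range(100)]
trace = quiet + burst + quiet

flagged = vibration_windows(trace, window=50, threshold=0.05)
print(flagged)  # [4, 5]: the two 50 ms windows that contain the burst
```

Telling the two kinds of slip apart would take more than an amplitude threshold, which is exactly why it is a research question.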

Texture Recognition

Why would we want robots to be able to recognize textures? Two reasons: object recognition, and grasp stability assessment.

First, robots are often used in bin-picking tasks where they have to select the right object from an assortment of different ones. Tactile information about textures can help the robot recognize the right item.

Second, when a robot knows the properties of an object’s texture, it can estimate its surface roughness, which affects the friction between the gripper and the object and impacts the robot’s grasp stability.
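To see why friction matters so much for grasp stability, consider a simple Coulomb friction model of a two-finger grip holding an object against gravity: the grip holds as long as the total friction force (number of contacts × friction coefficient × normal force) is at least the object's weight. The Python sketch below works through that arithmetic. The mass and the two friction coefficients are hypothetical, and the step of estimating a friction coefficient from texture is not shown.

```python
G = 9.81  # gravitational acceleration, m/s^2

def min_grip_force(mass_kg, friction_coeff, contacts=2):
    """Minimum normal force per contact (N) needed to hold an object
    against gravity, assuming simple Coulomb friction at each contact."""
    return mass_kg * G / (contacts * friction_coeff)

# Hypothetical 0.5 kg object with a slippery surface and a rough one.
smooth = min_grip_force(0.5, friction_coeff=0.2)
rough = min_grip_force(0.5, friction_coeff=0.8)

print(round(smooth, 2))  # 12.26 N per finger
print(round(rough, 2))   # 3.07 N per finger
```

Under these assumptions, the slippery object needs four times the squeeze of the rough one, so a robot that misjudges the surface will either drop the object or grip it harder than necessary.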

The question now is how we can integrate this information so robots can quickly and accurately predict grasp outcomes based on friction on the surface of their tactile sensors.

Multimodal Sensors: the Future of Tactile Sensing

Until recently, most of the tactile sensors built for robots were made simply for static sensing, so they were only able to give a “map” of the applied pressure.

But now, the CoRo Lab has developed a sensor (currently being beta-tested by select Robotiq customers) that can do both static and dynamic sensing. The 28 static-sensing taxels operate at 59 Hz, and the single dynamic-sensing taxel operates at 1 kHz.
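The gap between those two rates matters. Assuming the figures are sampling rates, the Nyquist limit says a sampled signal can only represent frequencies up to half its sample rate, which this small Python sketch works out:

```python
def nyquist_hz(sample_rate_hz):
    """Highest frequency a signal sampled at `sample_rate_hz` can represent."""
    return sample_rate_hz / 2

static_limit = nyquist_hz(59)     # static taxels
dynamic_limit = nyquist_hz(1000)  # dynamic taxel

print(static_limit)   # 29.5
print(dynamic_limit)  # 500.0
```

So under that assumption the static taxels capture changes up to roughly 30 Hz, while the dynamic taxel can pick up vibrations up to 500 Hz.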

Clearly, dynamic sensing is an extraordinary tool for roboticists. It enables robots to recognize vibrations, textures, and brief moments of contact with an object, facilitating object-manipulation and recognition tasks. Now all that’s left is for you to go out there and see what your new multimodal sensor can do.
